THE NEW YORK TIMES: The lesson of AI literacy class — Don’t let the chatbot think for you

AI lessons are becoming more common in schools as a debate has exploded over whether chatbots are likely to improve — or doom — education.

The first session of a new artificial intelligence class this month for high school seniors in Newark, New Jersey, involved purely human intelligence.

The students’ assignment: to compare times when they had passively scrolled through AI-driven social media feeds with times when they had actively selected the videos or Google search results they wanted to see.

“Are you steering the technology or is it steering you?” asked a slide on the classroom whiteboard at Washington Park High School.

In a class discussion that followed, a student named Adrian Farrell, 18, said he had taken charge of AI by asking a chatbot to check his math homework for accuracy. Brianna Perez, 18, said she went into “passenger mode” when using a Spotify feature called AI DJ.

“It plays your favourite music so you don’t have to change it,” she said.

Schools across the United States are hustling to introduce a new subject: AI literacy.

In what some educators are calling a “driver’s license” for AI, the new lessons aim to teach students how to examine the latest tech tools and use them responsibly.

Teachers say they want to prepare young people to navigate a world increasingly shaped by AI, as chatbots manufacture human-sounding writing and employers use algorithms to help vet job candidates.

Some schools are focused on AI chatbots, teaching students how to prompt Google’s Gemini or Microsoft’s Copilot. Some are introducing AI as a new class topic, with lessons examining societal consequences like the spread of AI-generated nude images, known as deepfakes.

Proponents say schools must quickly teach young people to use AI to assist their learning, prepare them for jobs and help the United States compete with China.

Last year, President Donald Trump issued an executive order urging schools to teach “foundational AI literacy” starting in kindergarten.

Education researchers warn that chatbots can make stuff up, enable cheating and erode critical thinking.

A recent study from Cambridge University Press & Assessment and Microsoft Research found that students who took notes on text passages had better reading comprehension than students who got help from chatbots.

For now, “the risks of utilising AI in education overshadow its benefits,” the Brookings Institution concluded last month in a report on school AI use.

Amid the debate, schools such as Washington Park High are staking out a middle ground by treating AI as if it were a car and helping students develop rules for the road.

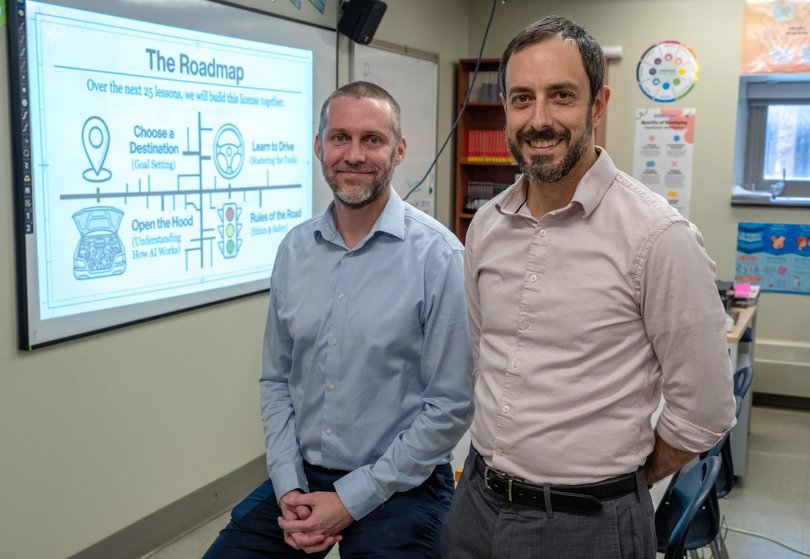

Mike Taubman, 45, a career explorations teacher who co-developed the school’s new literacy course, compared the class to preparing teenagers for their driver’s license exam.

“Where do you want to go, and can AI help you get there?” Mr Taubman asked. Students needed to learn to drive AI tools, analyse what’s under the hood, develop guidelines for personal use and design ideal safety policies, he said.

“What do I think the laws, the rules, the norms should be around AI for my city, my country?” he added.

Washington Park, a four-story building with a red brick facade in downtown Newark, serves about 900 high school students. It is part of Uncommon Schools, a charter school network in the Northeast focused on college and career preparation.

Mr Taubman and Scott Kern, a US history teacher, came up with the idea for the new elective class on AI. Both had already introduced AI tools and topics in their regular courses.

Mr Kern, 45, recently participated in a program at Playlab, a nonprofit that helps teachers create customised AI apps for their courses.

To help his students hone their argumentative writing, Mr Kern developed chatbots for his US history classes based on his course materials and student assessments.

He also developed firm guidelines for students on when to use — and when not to use — AI bots.

On a recent Tuesday, Mr Kern taught an Advanced Placement US History class on the Chicago Race Riot, violent protests set off by the murder of a Black teenager in 1919.

First, he asked students to read century-old newspaper clippings and other historical documents. Then, he led a class discussion on broader trends that had helped fuel the tensions.

Next, Mr Kern asked students to spend a few minutes describing the main cause of the riot to a chatbot he had created for the class. Allyson Johnson, 17, opened her laptop and typed in her answer: entrenched racial segregation.

“Let me push you on this,” the chatbot responded. Segregation had existed for decades before 1919. So what specific factors, the bot asked, had caused a tense situation to suddenly escalate “into explosive violence”?

Ms Johnson said she enjoyed sparring with the AI because “the chatbot asked me different questions that pushed my argument even more.”

After a few minutes, Mr Kern told students that their time with the chatbot was up and resumed the class discussion. Fundamental student learning should remain an AI-free activity, he said.

“Anytime where we want kids interacting with each other or doing initial critical thinking, I would never want AI or any sort of technology of that ilk to come in and interfere with that,” Mr Kern said.

In another part of the school, Mr Taubman was leading a career explorations course. He has developed a variety of career simulation chatbots for the class.

One enables students interested in fields like speech pathology to create and learn about virtual patients with detailed medical histories.

Aniya Gervais, 17, is interested in becoming a mental health nurse practitioner. For a class project, she wanted to create a hypothetical nonprofit for teenagers with mental health issues.

But after discussing her plan with one of Mr Taubman’s class AI bots, she concluded her initial idea was too broad. So she narrowed the project to focus on teenagers struggling with both depression and substance abuse.

Ms Gervais said she often used ChatGPT for tasks like coming up with pasta recipes or planning fitness routines.

“Before, I was telling AI what to do, and it was just telling me what to do,” Ms Gervais said. “But now,” she said of Mr Taubman’s classroom chatbots, “I’m asking the AI questions that will help me get to the answer.”

This semester, Mr Kern and Mr Taubman decided to join forces to formalise their AI education methods in an elective class. Eighteen students signed up.

During the first class this month, students learned about how some film directors had started using AI to generate movie scenes. Should humans still get credit for that?

As long as people directed the video-generating bots, some students said, they would consider humans to be a film’s authors. Other students argued that tech giants had trained AI on decades of artists’ work, potentially amounting to intellectual property theft.

(The New York Times has sued OpenAI and Microsoft over copyright infringement claims. Both companies have denied wrongdoing.)

Mr Kern and Mr Taubman acknowledged that their driver’s license metaphor had limits. Until chatbots have built-in safeguards akin to seatbelts and airbags, they said, it will be difficult for students to make truly informed decisions about the risks of powerful AI systems.

Mr Kern said he hoped that students would one day “have influence to build these tools in a way that’s better and more equitable and more environmentally friendly than what exists now.”

Ms Perez, a senior taking the new course, said she already felt empowered learning about AI’s uses and risks. The school hopes to offer the AI literacy class soon to all 12th graders.

“If it wasn’t for courses like this that are being implemented, we could really go into our future like not knowing what’s coming,” Ms Perez said.

This article originally appeared in The New York Times.

© 2026 The New York Times Company