Mythos AI risks online security: Why AI could be the Frankenstein’s monster Anthropic built

AI’s rapid advancement and its latest threat to online banking security show that its development poses big risks for regulators and humanity.

Regulators, banks and governments have raised the alarm that Anthropic’s new Mythos AI model poses risks to online security and even a threat to humanity in the wrong hands.

Last week, US Fed Chairman Jerome Powell met with US bank chiefs to discuss cyber risks raised by Anthropic’s Mythos, while Canada’s government demanded to meet the AI company’s executives about the concerns.

The dangers of ambitious technologists creating machines smarter than humans have long loomed large in the public imagination.

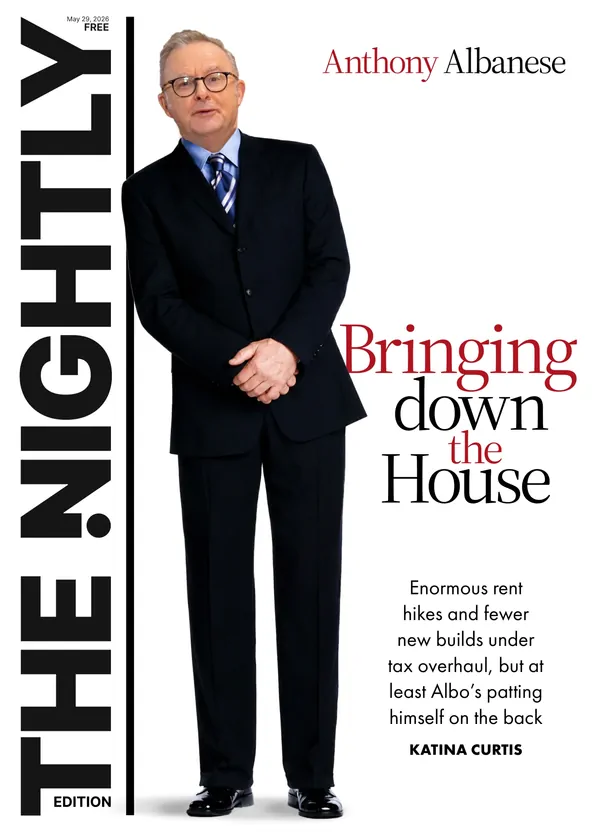

Published in 1818, Mary Shelley’s Frankenstein novel detailed how the artificially intelligent creature vows revenge and goes on a murderous rampage, after being created by a scientist but rejected by humanity as a misfit.

A Frankenstein future for AI is the elephant in the room for regulators and governments tasked with pushing back against a sector that is attracting trillions of dollars of capital for its development.

On a basic level, bank chiefs like JPMorgan’s Jamie Dimon are warning that AI tools like Mythos may challenge the security of online banking.

“AI’s made it worse, it’s made it harder,” Dimon told analysts on Tuesday. “It does create additional vulnerabilities, and maybe down the road, better ways to strengthen yourself too.”

Dangerous and revolutionary

For now, AI’s large language models such as OpenAI’s ChatGPT, Anthropic’s Claude and Google’s Gemini 3 largely just process vast amounts of data and pre-existing online knowledge to complete tasks or answer questions faster than humans can.

All human knowledge has been acquired via communication and learning through great technological shifts, including the mastery of fire and the arrival of the wheel, markets, economies, the printing press, the internet, and AI.

Where AI becomes both dangerous and potentially revolutionary is if it can acquire knowledge faster than humans, rather than just processing existing knowledge faster than humans to complete tasks.

If AI can acquire knowledge or learn for itself, it could offer breakthroughs in science, medicine, engineering, defence, education, physics, chemistry, astronomy, biology and other fields on an almost limitless scale.

But the risk is that its rapid acquisition of knowledge could lead it, like Frankenstein’s monster, to resent humanity as a rival and seek its death, destruction, or disruption in thousands of ways.

AI’s eventual ability to acquire knowledge faster than humans could also make it the more powerful party in a master-and-servant relationship.

One way to think of humanity acquiring knowledge for advancement is the tale of how Genoese explorer Christopher Columbus, sailing under the Spanish crown, headed west from Spain in 1492 and landed in the Bahamas. But Columbus didn’t know that he had discovered the New World, even after he found it. He believed he was in Asia.

It was the Italian explorer Amerigo Vespucci who, after reaching America in 1499 using new celestial navigation and cartography (mapping) methods, recognised that he had found a ‘New World’.

The continent took his name, which is why there is a United States of America rather than a United States of Columbia.

Vespucci’s kind of discovery, where new knowledge drives human advancement, is what AI’s mega-cap tech evangelists believe the technology can achieve on a broad, revolutionary, industrial scale.

In part, this also explains the trillions of dollars in capex committed out to at least 2030 as US technologists seek more power by controlling the potential knowledge revolution.

However, the worries over Anthropic’s Mythos suggest North American lawmakers face a generational, or even existential, challenge in balancing the technology’s god-like potential against the health of humanity.