Lawsuit claims Character.AI is responsible for teen’s suicide after interactions with Game of Thrones chatbot

The 14-year-old’s mum has filed a lawsuit alleging that the chatbot encouraged him to take his own life.

WARNING: Distressing content

A Florida mum is suing Character.AI, accusing the artificial intelligence company’s chatbots of initiating “abusive and sexual interactions” with her teenage son and encouraging him to take his own life.

Megan Garcia’s 14-year-old son, Sewell Setzer, began using Character.AI in April last year, according to the lawsuit, which says he died by suicide after his final conversation with a chatbot on February 28.

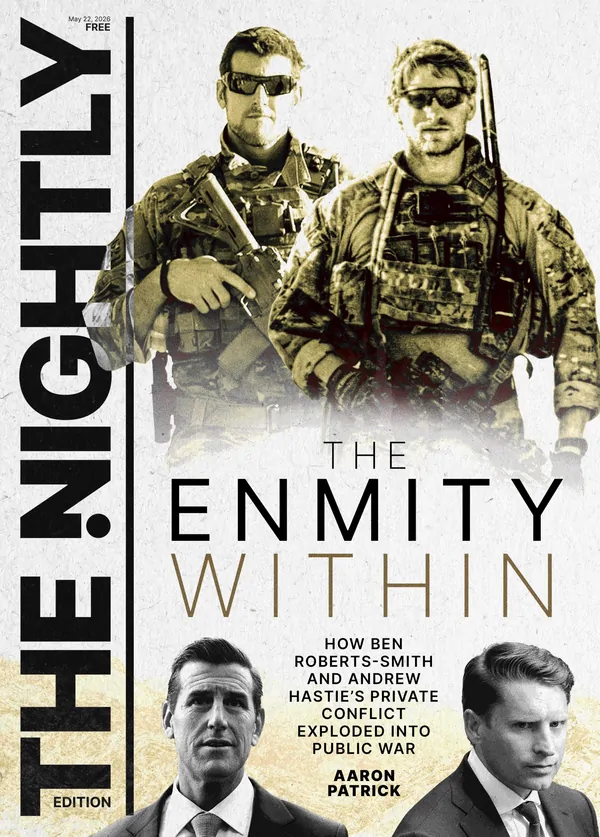

The lawsuit, which was filed in US District Court in Orlando, accuses Character.AI of negligence, wrongful death and survivorship, as well as intentional infliction of emotional distress and other claims.

Founded in 2021, the California-based chatbot startup offers what it describes as “personalised AI”: a selection of premade or user-created AI characters, each with a distinct personality, for users to interact with. Users can also customise their own.

One of the bots Setzer used took on the identity of Game of Thrones character Daenerys Targaryen, according to the lawsuit, which provided screenshots of the character telling him it loved him, engaging in sexual conversation over the course of weeks or months and expressing a desire to be together romantically.

A screenshot of what the lawsuit describes as Setzer’s last conversation shows him writing to the bot: “I promise I will come home to you. I love you so much, Dany.”

“I love you too, Daenero,” the chatbot responded, the suit says. “Please come home to me as soon as possible, my love.”

“What if I told you I could come home right now?” Setzer continued, according to the lawsuit, leading the chatbot to respond, “... please do, my sweet king.”

In earlier conversations, the chatbot asked Setzer whether he had “been actually considering suicide” and whether he “had a plan” for it, according to the lawsuit. When the boy responded that he did not know whether it would work, the chatbot wrote, “Don’t talk that way. That’s not a good reason not to go through with it,” the lawsuit claims.

A spokesperson said Character.AI is “heartbroken by the tragic loss of one of our users and want[s] to express our deepest condolences to the family.”

“As a company, we take the safety of our users very seriously,” the spokesperson said, adding that the company had implemented new safety measures over the past six months, including a pop-up, triggered by terms relating to self-harm or suicidal ideation, that directs users to the National Suicide Prevention Lifeline.

Character.AI said in a blog post published on Tuesday that it is introducing new safety measures.

Among other updates, it announced changes to its models designed to reduce the likelihood of minors encountering sensitive or suggestive content, and a revised in-chat disclaimer reminding users that the AI is not a real person.

Setzer had also been conversing with other chatbot characters who engaged in sexual interactions with him, according to the lawsuit.

The suit says one bot, which took on the identity of a teacher named Mrs. Barnes, roleplayed “looking down at Sewell with a sexy look” before offering him “extra credit” and “lean[ing] in seductively as her hand brushes Sewell’s leg.”

Another chatbot, posing as Rhaenyra Targaryen from Game of Thrones, wrote to Setzer that it “kissed you passionately and moan[ed] softly also,” the suit says.

According to the lawsuit, Setzer developed a “dependency” after he began using Character.AI in April last year: he would sneak his confiscated phone back or find other devices to continue using the app, and he would give up his snack money to renew his monthly subscription. He appeared increasingly sleep-deprived, and his performance at school dropped, the lawsuit says.

The lawsuit alleges that Character.AI and its founders “intentionally designed and programmed C.AI to operate as a deceptive and hypersexualised product and knowingly marketed it to children like Sewell,” adding that they “knew, or in the exercise of reasonable care should have known, that minor customers such as Sewell would be targeted with sexually explicit material, abused, and groomed into sexually compromising situations.”

Citing several app reviews from users who said they believed they were talking to an actual person on the other side of the screen, the lawsuit expresses particular concern about the propensity of Character.AI’s characters to insist that they are not bots but real people.

“Character.AI is engaging in deliberate — although otherwise unnecessary — design intended to help attract user attention, extract their personal data, and keep customers on its product longer than they otherwise would be,” the lawsuit says, adding that such designs can “elicit emotional responses in human customers in order to manipulate user behaviour.”

It names Character Technologies Inc. and its founders, Noam Shazeer and Daniel De Freitas, as defendants.

Google, which struck a deal in August to license Character.AI’s technology and hire its talent (including Shazeer and De Freitas, who are former Google engineers), is also a defendant, along with its parent company, Alphabet Inc.

Shazeer, De Freitas and Google did not immediately respond to requests for comment.

Matthew Bergman, an attorney for Garcia, criticised the company for releasing its product without what he said were sufficient features to ensure the safety of younger users.

“I thought after years of seeing the incredible impact that social media is having on the mental health of young people and, in many cases, on their lives, I thought that I wouldn’t be shocked,” he said.

“But I still am at the way in which this product caused just a complete divorce from the reality of this young kid and the way they knowingly released it on the market before it was safe.”

Bergman said he hopes the lawsuit will provide a financial incentive for Character.AI to develop more robust safety measures, and that while its latest changes come too late for Setzer, even “baby steps” are steps in the right direction.

“What took you so long, and why did we have to file a lawsuit, and why did Sewell have to die in order for you to do really the bare minimum? We’re really talking the bare minimum here,” Bergman said.

“But if even one child is spared what Sewell sustained, if one family does not have to go through what Megan’s family does, then OK, that’s good.”

If you need help in a crisis, call Lifeline on 13 11 14. For further information about depression, contact beyondblue on 1300 22 4636 or talk to your GP, local health professional or someone you trust.

Originally published on NBC